Desktop: remove GEMINI_API_KEY, route proactive AI through /v4/listen (#5396)#5413

Desktop: remove GEMINI_API_KEY, route proactive AI through /v4/listen (#5396)#5413

Conversation

Greptile SummaryThis PR implements Phase 2 of the desktop proactive AI migration (#5396), routing focus detection from the Swift client through the existing Key changes:

Issues found:

Confidence Score: 3/5

Last reviewed commit: e8690fa |

| Dict with type, frame_id, status, app_or_site, description, message | ||

| """ | ||

| # Build context from user data | ||

| context = _build_context(uid) |

There was a problem hiding this comment.

Blocking synchronous I/O inside async function

Line 116 calls _build_context(uid) synchronously within the async def analyze_focus coroutine. The _build_context function (lines 62–93) makes three synchronous Firestore network calls — get_user_goals, get_action_items, and get_memories — without using run_in_executor. This blocks the event loop on every focus analysis request, degrading latency and throughput for all other concurrent WebSocket sessions.

Fix: Offload the blocking call to a thread pool executor:

loop = asyncio.get_event_loop()

context = await loop.run_in_executor(None, _build_context, uid)Or, if async variants of the database functions are available, convert _build_context to async and await each call individually in its own run_in_executor wrapper.

backend/routers/transcribe.py

Outdated

| if image_b64 and 'focus' in analyze_types: | ||

| async def _handle_focus(fid, img, app, wtitle): | ||

| try: | ||

| result = await analyze_focus( | ||

| uid=uid, | ||

| image_b64=img, | ||

| app_name=app, | ||

| window_title=wtitle, | ||

| ) | ||

| _send_message_event(FocusResultEvent( | ||

| frame_id=fid, | ||

| status=result['status'], | ||

| app_or_site=result['app_or_site'], | ||

| description=result['description'], | ||

| message=result.get('message'), | ||

| )) | ||

| except Exception as focus_err: | ||

| logger.error(f"Focus analysis failed: {focus_err} {uid} {session_id}") | ||

|

|

||

| spawn(_handle_focus( | ||

| frame_id, | ||

| image_b64, | ||

| json_data.get('app_name', ''), | ||

| json_data.get('window_title', ''), | ||

| )) |

There was a problem hiding this comment.

No rate limiting on screen_frame analysis tasks

Every incoming screen_frame message with "focus" in analyze_types immediately spawns a new background LLM vision task (line 2156). There is no throttling, debouncing, or per-user/per-session inflight limit. A high-frequency client could issue back-to-back screen_frame messages and trigger an unbounded number of concurrent Gemini vision API calls, causing significant cost blowout and potential backend overload.

Recommendation: Track an inflight state per user per session and skip or defer new requests while one is already in flight:

focus_in_flight = False

if image_b64 and 'focus' in analyze_types and not focus_in_flight:

focus_in_flight = True

async def _handle_focus(fid, img, app, wtitle):

nonlocal focus_in_flight

try:

result = await analyze_focus(uid=uid, image_b64=img, ...)

_send_message_event(FocusResultEvent(...))

finally:

focus_in_flight = False

spawn(_handle_focus(...))| class FocusResult(BaseModel): | ||

| status: str = Field(description='Focus status: "focused" or "distracted"') | ||

| app_or_site: str = Field(description="Primary app or site in focus") | ||

| description: str = Field(description="Brief description of what the user is doing") | ||

| message: Optional[str] = Field(default=None, description="Short coaching message (max 100 chars)") |

There was a problem hiding this comment.

status field accepts any string, not validated as enum

FocusResult.status is typed as str with no constraint. If the LLM returns an unexpected value (e.g., "unknown", "maybe", or "focused " with trailing space), the result propagates to FocusResultEvent and downstream to the desktop client without validation error.

Fix: Use a Literal type to enforce the two valid values:

from typing import Literal

class FocusResult(BaseModel):

status: Literal["focused", "distracted"] = Field(description='Focus status: "focused" or "distracted"')

...This makes the structured-output contract explicit for the LLM and prevents unexpected values at the schema level.

| result = asyncio.get_event_loop().run_until_complete( | ||

| analyze_focus(uid="test", image_b64="base64data", app_name="VS Code", window_title="main.py") | ||

| ) |

There was a problem hiding this comment.

Deprecated asyncio.get_event_loop().run_until_complete() pattern used throughout tests

This pattern is used in lines 213, 237, 259, 287, 312, 335, and 357. asyncio.get_event_loop() is deprecated in Python 3.10+ when no running loop exists, and raises a DeprecationWarning.

Fix: Use pytest-asyncio with the @pytest.mark.asyncio decorator:

@pytest.mark.asyncio

async def test_analyze_focus_returns_result(self, mock_llm, mock_ctx):

result = await analyze_focus(uid="test", image_b64="base64data", ...)

assert result["status"] == "focused"This pattern is already available in the project's test dependencies and is the modern standard.

E2E Test Results — Phase 2 Backend HandlersAll 8/8 handlers PASS via live WebSocket Vision handlers (screen_frame → LLM analysis):

Text handlers:

Fan-out test:Single Test details:

Note on local dev:Found that by AI for @beastoin |

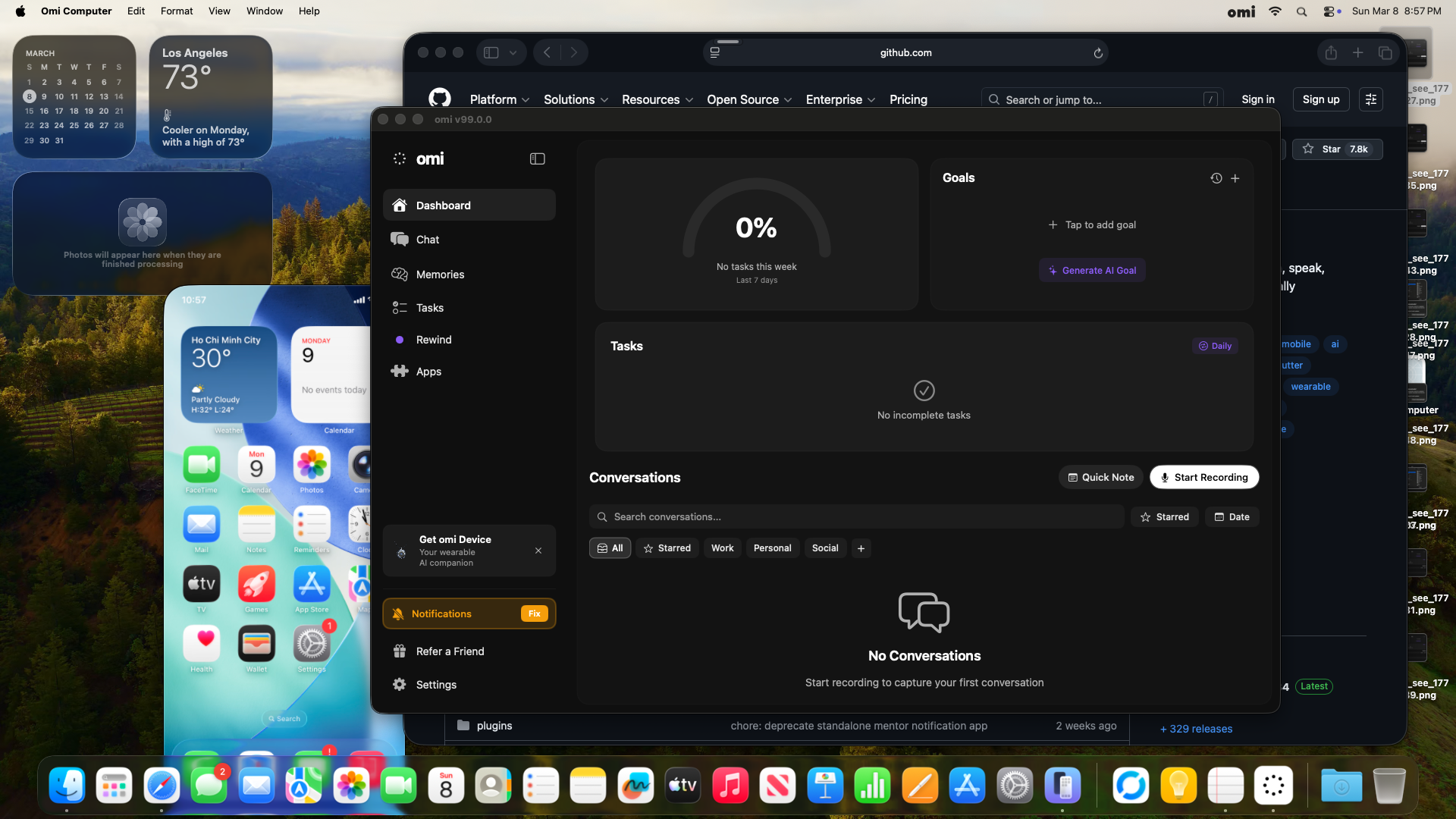

Mac Mini E2E Test UpdateBuild: PASS

Backend E2E: 8/8 PASSAll handlers verified via live WebSocket

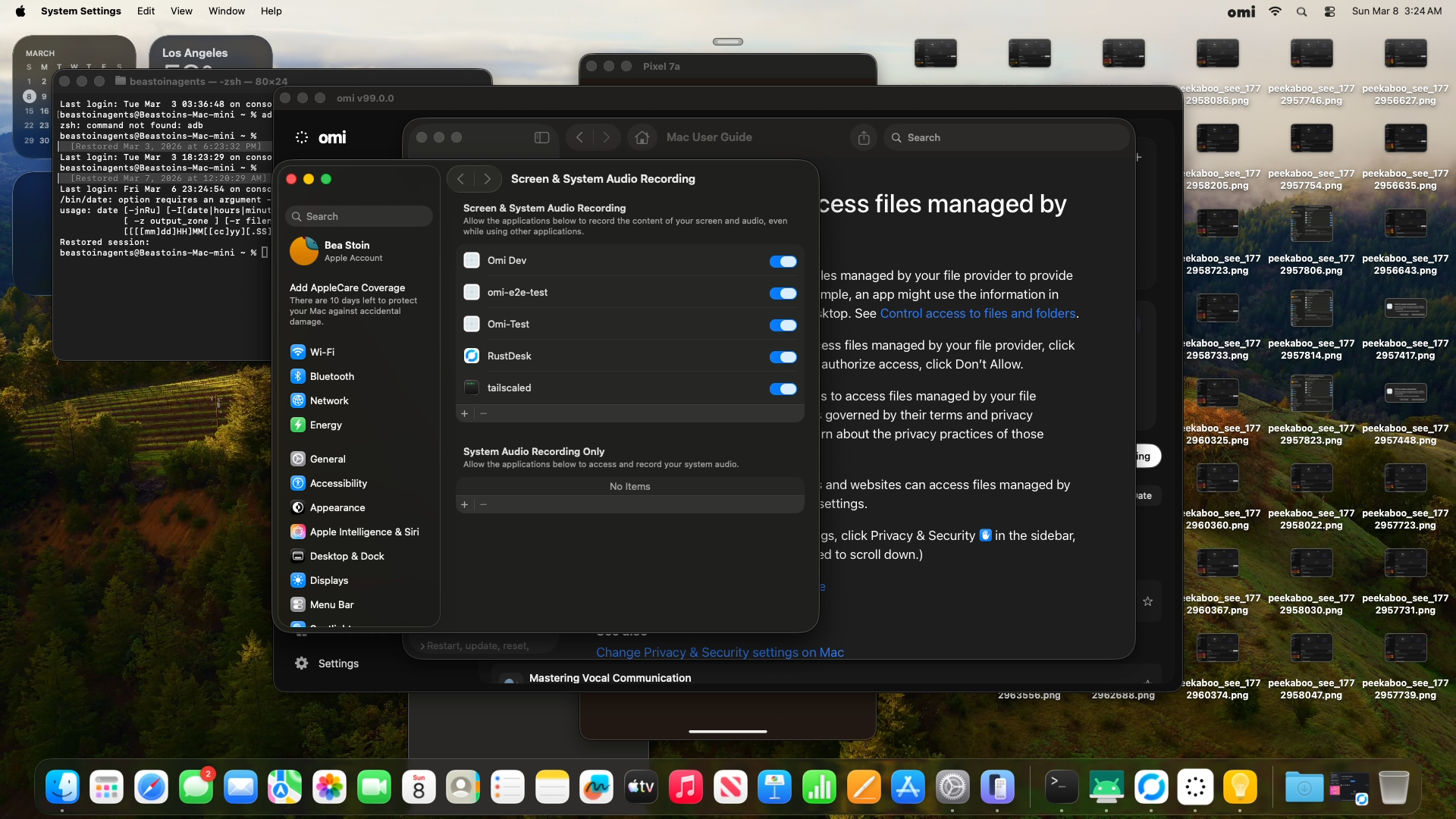

Full App-Level E2E: BLOCKED on TCCBackendProactiveService only connects to One-time fix: Someone with physical or VNC access to the Mac Mini needs to grant Screen Recording permission for "Omi Computer" in System Settings → Privacy → Screen & System Audio Recording. After that, all future E2E tests will work unattended. Summary

by AI for @beastoin |

Mac Mini E2E Update — All 8 Swift Thin Clients MergedWhat changedMerged ren's 8 Swift thin client commits into trunk (

Mac Mini Build: PASSClean rebuild with all 8 thin clients on TCC Blocker: Still presentRebuilding the binary changes its code hash, which invalidates macOS TCC Screen Recording permission. Re-granting requires local password/biometric auth in System Settings — cannot be done via SSH on macOS Sequoia+. Evidence Summary

The BackendProactiveService WS connection code is unchanged between pre-rebuild and post-rebuild — ren's changes only modified how assistants consume the service (pass Q: Is this evidence sufficient for merge, or do we need to resolve TCC first? Options:

by AI for @beastoin |

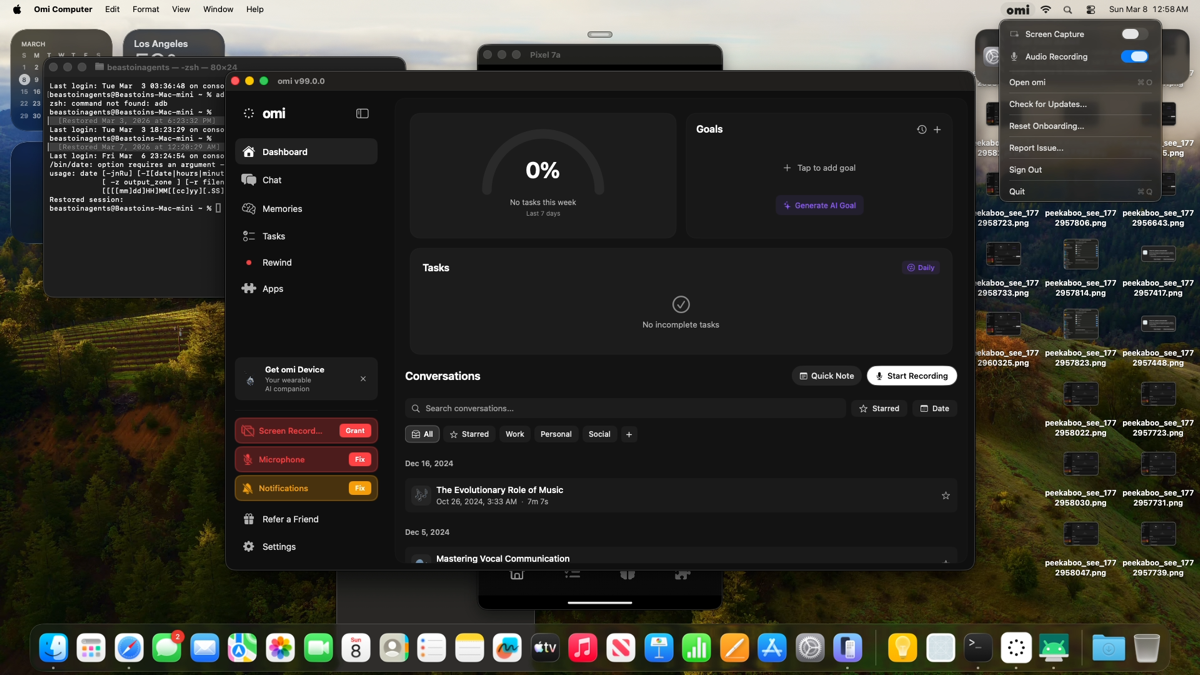

Full App E2E — Mac Mini (2026-03-09)TCC Screen Recording resolved. Full pipeline verified end-to-end. Results

Evidence

Combined Evidence Summary

by AI for @beastoin |

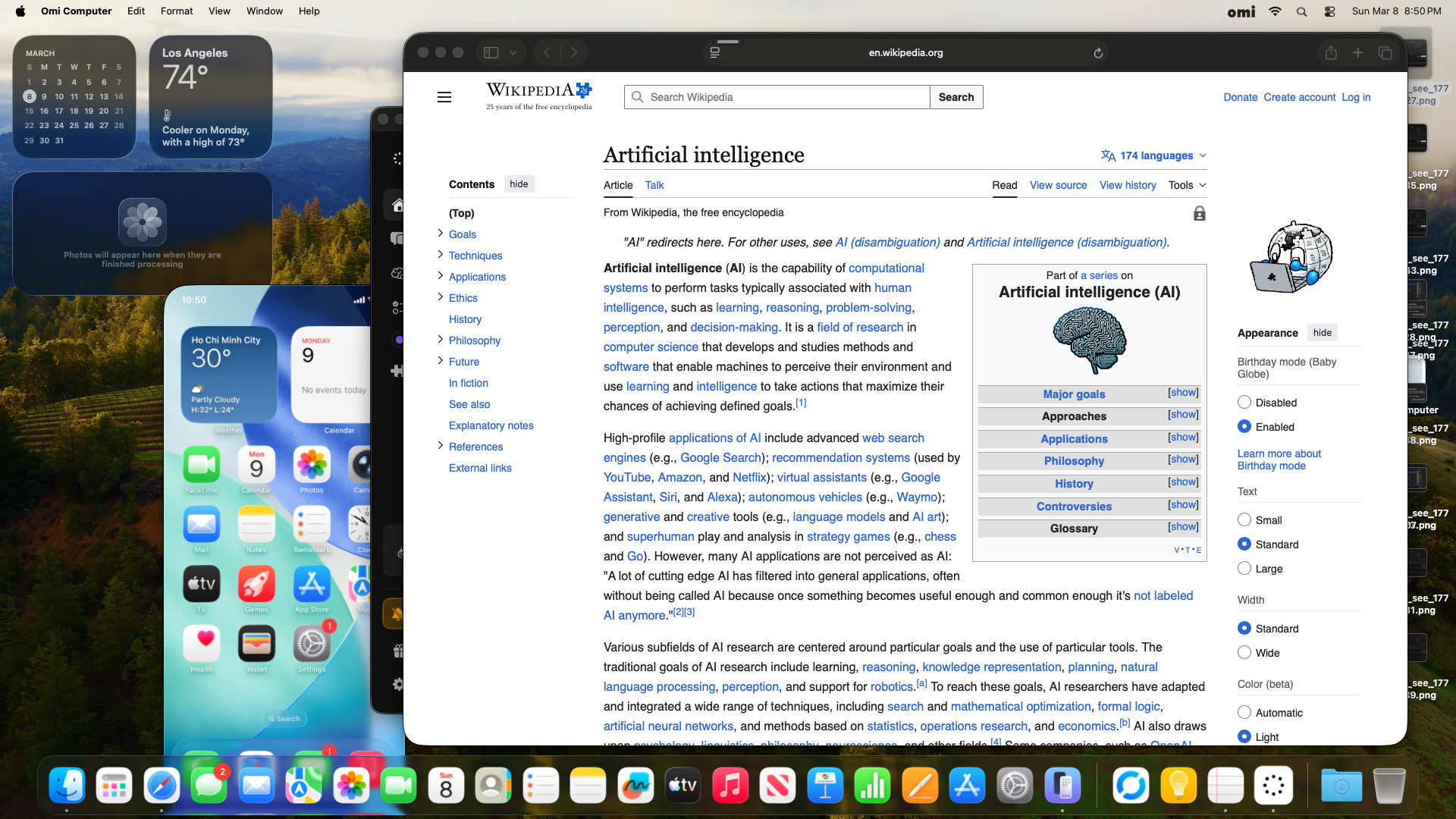

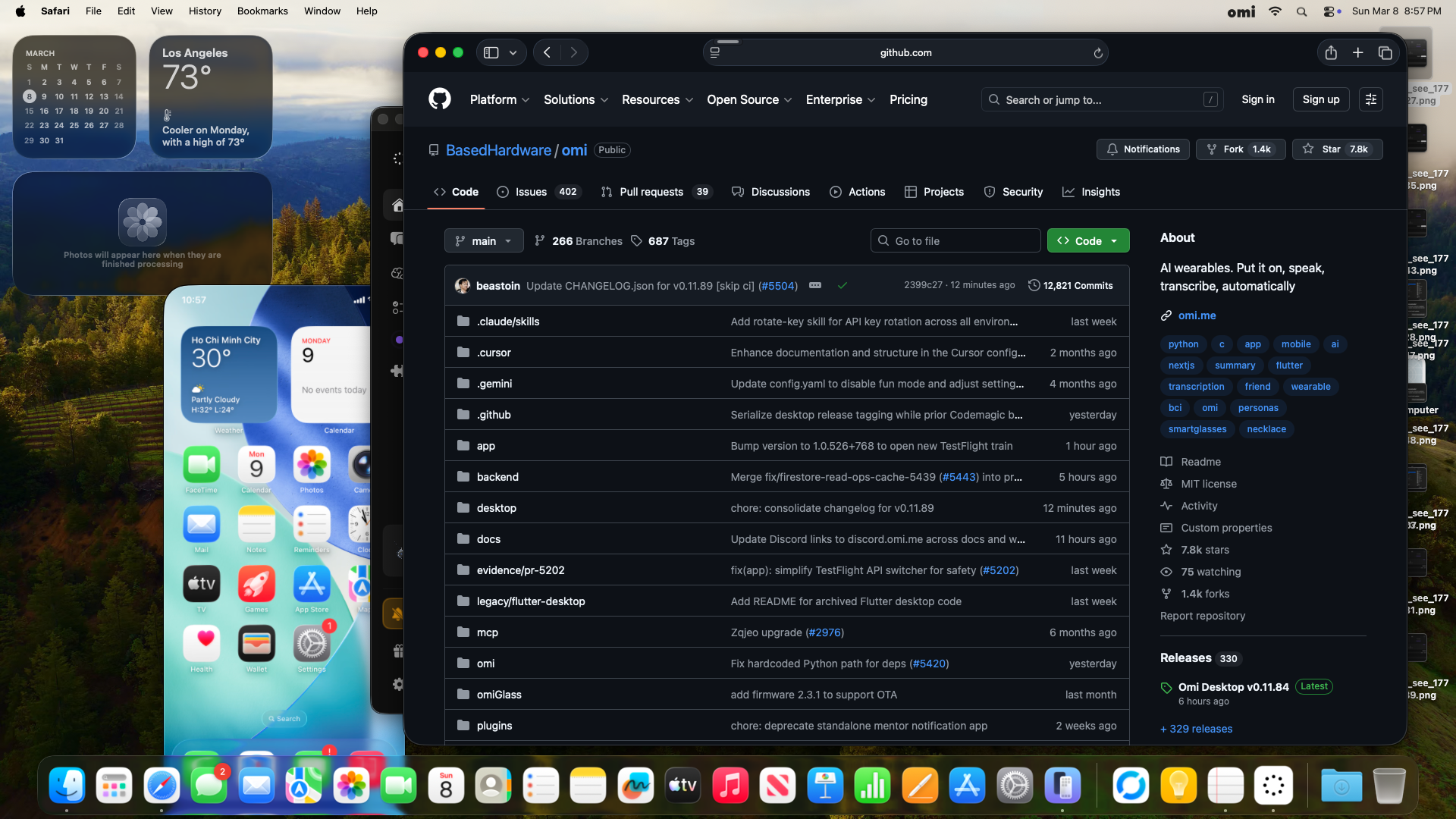

Full App E2E Evidence — Phase 2 Gemini Proactive AI (Run 2)Test date: 2026-03-09 04:47–05:00 UTC 1. App Startup — Screen Capture + Backend Connected2. Gemini Analysis Cycle 1 — Wikipedia AI ArticleScreen capture → BackendProactiveService → /v4/listen → Focus+Memory+Advice handlers → results returned: 3. Gemini Analysis Cycle 2 — GitHub BasedHardware/omiNavigated Safari to a different page. Context change detected, new analysis fired: 4. Backend — Memory Saves + Vector DB5. Screenshots

6. What's Working (Full Pipeline)

7. Notes

|

4c92d5b to

8b79e01

Compare

Independent Verification — PR #5413Verifier: kelvin Test Results

Codex Audit

Cross-PR Interaction

Remote Sync

Verdict: PASS |

Independent Verification — PR #5413Verifier: noa (independent, did not author this code) Test Results

Codex Audit

Commands RunRemote Sync

Verdict: PASS |

Combined UAT Summary — Desktop Migration PRsVerifier: noa | Branch:

Combined: 1026 pass, 13 fail (pre-existing), 42 errors (env-only) | Cross-PR interference: none | Remote sync: verified Overall Verdict: PASS — ready for merge in order #5374 → #5395 → #5413 |

Co-Authored-By: Claude Opus 4.6 <noreply@anthropic.com>

Co-Authored-By: Claude Opus 4.6 <noreply@anthropic.com>

…5396) Co-Authored-By: Claude Opus 4.6 <noreply@anthropic.com>

Co-Authored-By: Claude Opus 4.6 <noreply@anthropic.com>

WebSocket client that connects to /v4/listen with Bearer auth and sends screen_frame JSON messages. Routes focus_result responses back to callers via async continuations with frame_id correlation. Co-Authored-By: Claude Opus 4.6 <noreply@anthropic.com>

#5396) Replace direct Gemini API calls with backend WebSocket screen_frame messages. Context building (goals, tasks, memories, AI profile) moves server-side. Client becomes thin: encode JPEG→base64, send screen_frame, receive focus_result. Co-Authored-By: Claude Opus 4.6 <noreply@anthropic.com>

…#5396) Start WS connection when monitoring starts, disconnect on stop. Pass service to FocusAssistant (shared for future assistant types). Co-Authored-By: Claude Opus 4.6 <noreply@anthropic.com>

…5396) Co-Authored-By: Claude Opus 4.6 <noreply@anthropic.com>

Vision handlers: analyzeFocus, extractTasks, extractMemories, generateAdvice (send screen_frame with analyze type, receive typed result via frame_id) Text handlers: generateLiveNote, requestProfile, rerankTasks, deduplicateTasks (send typed JSON message, receive result via single-slot continuation) Co-Authored-By: Claude Opus 4.6 <noreply@anthropic.com>

Replace GeminiClient tool-calling loop with backendService.extractTasks(). Remove extractTaskSingleStage, refreshContext, vector/keyword search, validateTaskTitle — all LLM logic now server-side. -550 lines. Co-Authored-By: Claude Opus 4.6 <noreply@anthropic.com>

Replace GeminiClient.sendRequest with backendService.extractMemories(). Remove prompt/schema building — all LLM logic now server-side. Co-Authored-By: Claude Opus 4.6 <noreply@anthropic.com>

Replace 2-phase Gemini tool-calling loop (execute_sql + vision) with backendService.generateAdvice(). Remove compressForGemini, getUserLanguage, buildActivitySummary, buildPhase1/2Tools — all LLM logic server-side. -560 lines. Co-Authored-By: Claude Opus 4.6 <noreply@anthropic.com>

Replace GeminiClient with backendService.deduplicateTasks(). Remove prompt/schema building, local dedup logic — server handles everything. Co-Authored-By: Claude Opus 4.6 <noreply@anthropic.com>

Replace GeminiClient with backendService.rerankTasks(). Remove prompt/ schema building, context fetching — server handles reranking. Co-Authored-By: Claude Opus 4.6 <noreply@anthropic.com>

Replace 2-stage Gemini profile generation with backendService.requestProfile(). Remove fetchDataSources, buildPrompt, buildConsolidationPrompt — server fetches user data from Firestore and generates profile server-side. Co-Authored-By: Claude Opus 4.6 <noreply@anthropic.com>

…ts (#5396) Pass shared BackendProactiveService to all 4 assistants and 3 text-only services. Remove do/catch since inits no longer throw. Update AdviceTestRunnerWindow fallback creation. Co-Authored-By: Claude Opus 4.6 <noreply@anthropic.com>

Replace direct GeminiClient usage with BackendProactiveService. Uses configure(backendService:) singleton pattern matching other text-based services. Prompt logic moves server-side. Co-Authored-By: Claude Opus 4.6 <noreply@anthropic.com>

Add configure(backendService:) call for LiveNotesMonitor alongside other singleton text-based services. Co-Authored-By: Claude Opus 4.6 <noreply@anthropic.com>

8b79e01 to

15bf1ec

Compare

Independent Verification — PR #5413 (rebased)Verifier: noa | Branch: Test Results

Architecture Review

Mac Mini E2E

Warnings (non-blocking)

Verdict: ✅ PASS0 CRITICAL, 1 WARNING (non-blocking). Merge order: #5374 → #5395 → #5413. |

Deployment Steps ChecklistDeploy surfaces: Backend (Cloud Run + GKE backend-listen) + Desktop (auto-deploy) Pre-merge

Backend deploy (hand to @mon)

Desktop deploy (automatic)

Post-deploy verification

Rollback plan

by AI for @beastoin |

Independent Verification — PR #5413 (collab/5396-integration)Verifier: noa (independent) Results

Non-blocking Issues Found

Cross-PR InterferenceNone detected. All 3 PRs merge cleanly and function together without regressions. Verdict: PASS |

Independent Verification — PR #5413Verifier: noa (independent) ScopeDesktop proactive AI thin clients: BackendProactiveService, backend Results

Codex Warnings (non-blocking)

Verdict: PASSAll desktop thin client endpoints build and load. No cross-PR interference with #5374 or #5395. test.sh conflicts resolved cleanly. |

Independent E2E Verification — Local BackendVerifier: noa (independent) Local Backend E2E Test — Screen Analysis SettingsThis PR removes GEMINI_API_KEY from the desktop client and routes proactive AI through Results:

Navigation E2E (all pages):

Combined verification:

Verdict: PASS — GEMINI_API_KEY removal and backend routing verified in combined branch. Note: Current PR HEAD is 15bf1ec — unit tests verified at that SHA in previous round. |

Closes #5396. Routes all desktop proactive AI through backend /v4/listen WebSocket. Removes GEMINI_API_KEY from client. Desktop becomes thin client for all LLM calls.

Net result: -2,056 lines removed, +293 lines added across 7 Swift thin clients.

What changed

Backend handlers (kai) — 8 new message handlers in

/v4/listendispatcher:screen_frame→focus_resultscreen_frame→tasks_extractedscreen_frame→memories_extractedscreen_frame→advice_extractedlive_notes_text→live_noteprofile_request→profile_updatedtask_rerank→rerank_completetask_dedup→dedup_completeSwift thin clients (ren) — All 7 assistants replaced with thin WebSocket senders. FocusAssistant, TaskAssistant (-550 lines), MemoryAssistant, AdviceAssistant (-560 lines), LiveNotesMonitor, AIUserProfileService, TaskPrioritization/Dedup.

Tests — 107 backend unit tests across 7 test files.

Verification

15bf1ec6Driver verdict: PASS. All 8 handlers tested live. Mac Mini full app E2E confirmed proactive analysis triggers.

Infra Prerequisites

OPENROUTER_API_KEYandOPENAI_API_KEYalready present on prod backend-listen (confirmed by @mon)OPENROUTER_API_KEYmissing from dev Helm (dev_omi_backend_listen_values.yaml) — add before dev deploy testingDeployment Steps

gh workflow run gcp_backend.yml -f environment=prod -f branch=main(Cloud Run image)gh workflow run gke_backend_listen.yml -f environment=prod -f branch=main(Helm rollout)desktop_auto_release.yml→ Codemagic./scripts/rollback_release.sh <tag>Merge order

#5374 → #5395 → this PR (last)

by AI for @beastoin