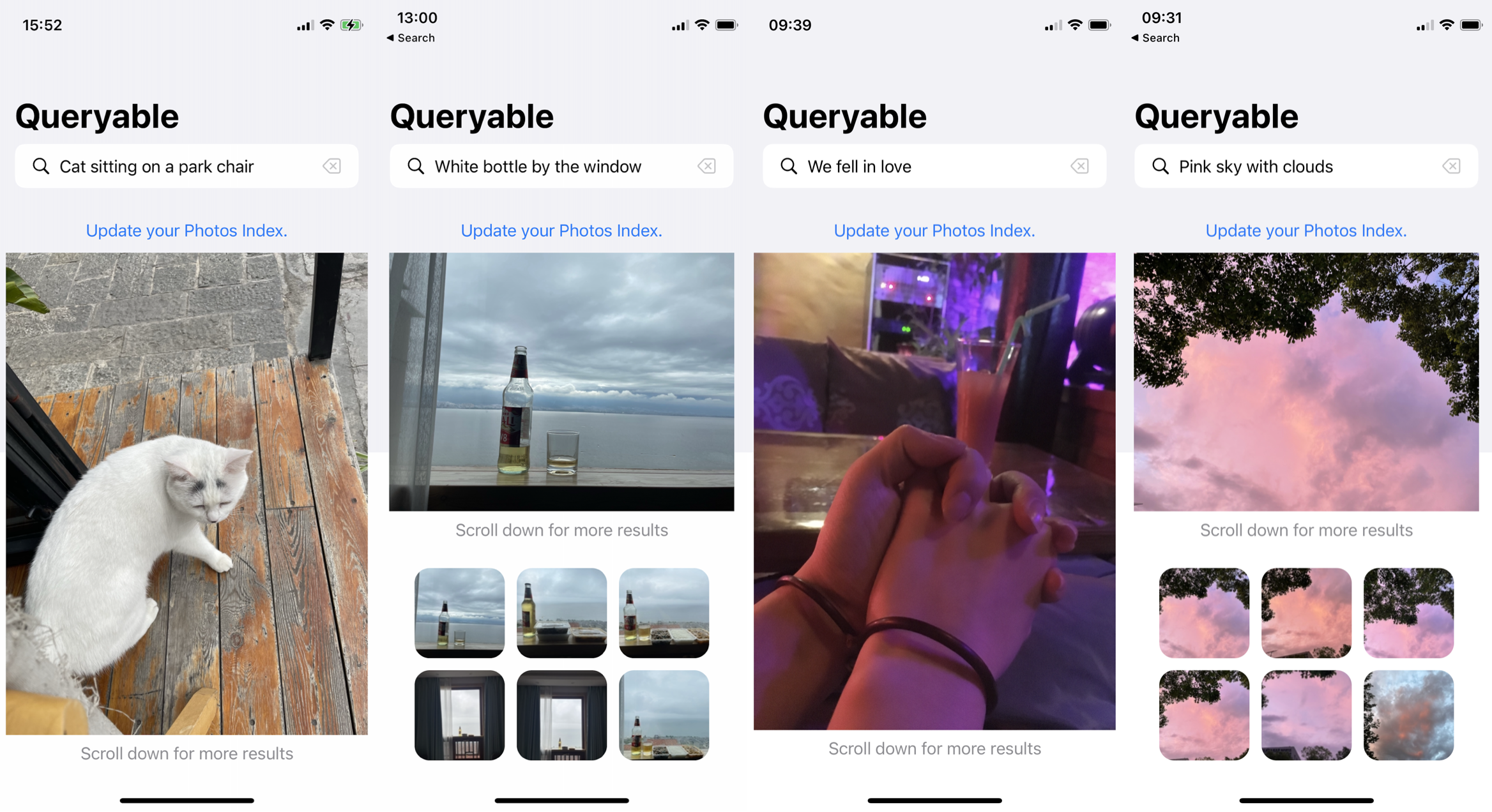

Queryable is an open-source iOS app that uses OpenAI's CLIP model to search your 'Photos' album offline. Unlike the category-based search built into the iOS Photos app, Queryable lets you search your album with natural-language queries such as "a brown dog sitting on a bench". Because everything runs offline, your album's privacy is not exposed to any company, including Apple or Google.

PicQuery (Android)

The Android version (Code), developed by @greyovo, supports both English and Chinese. See details in #12.

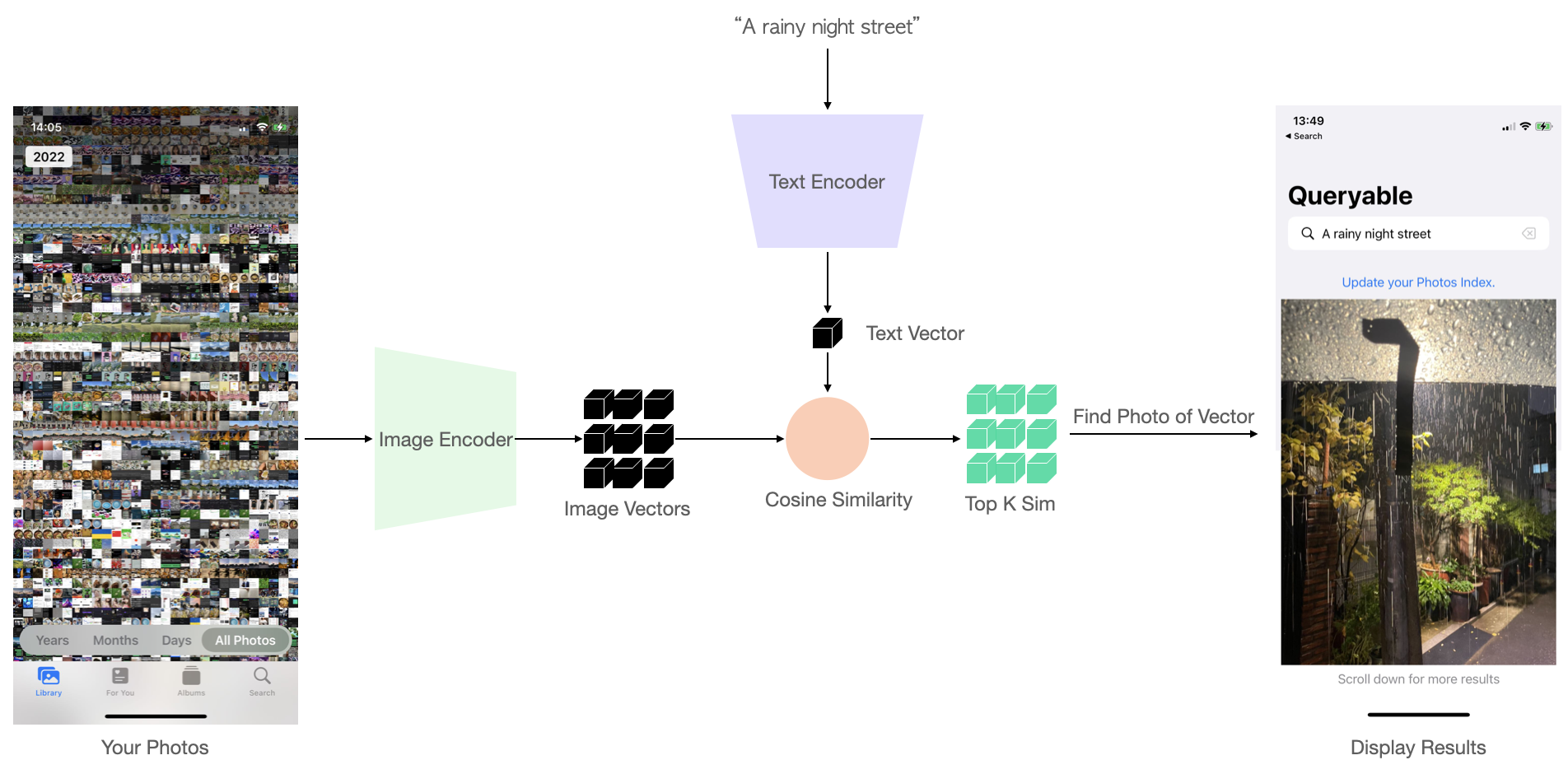

The process is as follows:

- Encode all album photos using the CLIP Image Encoder, compute image vectors, and save them.

- For each new text query, compute the corresponding text vector using the Text Encoder.

- Compare the similarity between this text vector and each image vector.

- Rank and return the top K most similar results.

For more details, see my blog post: Run CLIP on iPhone to Search Photos.
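The four search steps listed above can be sketched in a few lines. This is a minimal illustration, not Queryable's Swift code: `normalize`, `build_index`, and `search` are hypothetical names, the vectors are assumed to already come out of the image and text encoders (real CLIP ViT-B/32 embeddings have 512 dimensions, not the toy 3 used here), and similarity is measured with cosine similarity, computed as a dot product of unit-length vectors.

```python
import heapq
import math


def normalize(vec):
    """Scale a vector to unit length so that a dot product equals cosine similarity."""
    norm = math.sqrt(sum(x * x for x in vec))
    if norm == 0:
        raise ValueError("cannot normalize a zero vector")
    return [x / norm for x in vec]


def build_index(image_vectors):
    """Step 1: store one unit-length image vector per photo ID."""
    return {photo_id: normalize(vec) for photo_id, vec in image_vectors.items()}


def search(index, text_vector, k=3):
    """Steps 3-4: score every stored image vector against the query and keep the top K.

    The text vector from step 2 (the Text Encoder) is passed in directly.
    """
    query = normalize(text_vector)
    scored = [
        (photo_id, sum(q * v for q, v in zip(query, vec)))
        for photo_id, vec in index.items()
    ]
    # nlargest avoids fully sorting the album when only K results are needed.
    return heapq.nlargest(k, scored, key=lambda item: item[1])


# Toy 3-dimensional vectors stand in for real encoder outputs.
index = build_index({
    "dog_on_bench.jpg": [0.9, 0.1, 0.0],
    "beach.jpg": [0.0, 0.2, 0.9],
    "cat.jpg": [0.6, 0.7, 0.1],
})
for photo_id, score in search(index, [1.0, 0.0, 0.0], k=2):
    print(f"{photo_id}: {score:.3f}")
```

Normalizing the image vectors once at indexing time means each query only costs one dot product per photo, which is what makes a brute-force scan over the whole album practical on-device.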

Download the ImageEncoder_float32.mlmodelc and TextEncoder_float32.mlmodelc from Google Drive.

Clone this repo, put the downloaded models under the CoreMLModels/ path, then build and run the project in Xcode.
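Before opening Xcode, a small check like the following can confirm the models landed in the right place. It is a hypothetical helper, not part of the repo: `missing_models` and the default `CoreMLModels` argument are assumptions, so pass the actual CoreMLModels/ directory of your checkout. It relies on the fact that a compiled Core ML model (`.mlmodelc`) is a directory bundle rather than a single file.

```python
import sys
from pathlib import Path

# Compiled model names as given for the Google Drive download.
REQUIRED_MODELS = ("ImageEncoder_float32.mlmodelc", "TextEncoder_float32.mlmodelc")


def missing_models(models_dir):
    """Return the names of required models that are not present under models_dir."""
    root = Path(models_dir)
    # A compiled Core ML model (.mlmodelc) is a directory bundle, not a single file.
    return [name for name in REQUIRED_MODELS if not (root / name).is_dir()]


if __name__ == "__main__":
    # Hypothetical usage: python check_models.py path/to/CoreMLModels
    target = sys.argv[1] if len(sys.argv) > 1 else "CoreMLModels"
    missing = missing_models(target)
    if missing:
        print(f"Missing in {target}: {', '.join(missing)}")
    else:
        print(f"All models found in {target}")
```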

If you only want to run Queryable, you can skip the Core ML export step and use the exported models from Google Drive directly. If you want Queryable to support your own native language, or want to work on model quantization or acceleration, here are some guidelines.

The trick is to separate the TextEncoder and ImageEncoder at the architecture level, and then load the weights of each one individually. Queryable uses OpenAI's ViT-B/32 model, and I wrote a Jupyter notebook that demonstrates how to separate the encoders, load their weights, and export them as Core ML models. The exported Core ML ImageEncoder shows some precision error, and more appropriate normalization parameters may be needed.
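One place where normalization parameters matter: CLIP normalizes each RGB channel with its own mean and standard deviation, while a Core ML image input (coremltools `ImageType`) applies `scale * pixel + bias` with a single scalar `scale` and a per-channel `bias`. The sketch below is not taken from Queryable's notebook; it assumes you fold CLIP's normalization into those two parameters, replaces the per-channel standard deviation with its average (an assumption), and measures the error this introduces over all 8-bit pixel values.

```python
# CLIP preprocessing constants (RGB), as published in OpenAI's CLIP repository.
CLIP_MEAN = (0.48145466, 0.4578275, 0.40821073)
CLIP_STD = (0.26862954, 0.26130258, 0.27577711)


def exact_normalize(pixel, channel):
    """CLIP's per-channel normalization for an 8-bit pixel value in [0, 255]."""
    return (pixel / 255.0 - CLIP_MEAN[channel]) / CLIP_STD[channel]


def single_scale_params(mean=CLIP_MEAN, std=CLIP_STD):
    """Approximate CLIP normalization as scale * pixel + bias with one shared scale.

    Assumption: the per-channel std is replaced by its average so it fits a scalar scale.
    """
    avg_std = sum(std) / len(std)
    scale = 1.0 / (255.0 * avg_std)
    bias = [-m / avg_std for m in mean]
    return scale, bias


def max_abs_error(scale, bias):
    """Largest gap between the approximation and exact normalization over all 8-bit values."""
    worst = 0.0
    for channel in range(3):
        for pixel in range(256):
            approx = scale * pixel + bias[channel]
            worst = max(worst, abs(approx - exact_normalize(pixel, channel)))
    return worst


scale, bias = single_scale_params()
print(f"scale={scale:.6f}, bias={[round(b, 4) for b in bias]}")
print(f"max abs error vs. exact normalization: {max_abs_error(scale, bias):.4f}")
```

If that error matters, one option is to keep `scale=1/255` with zero bias on the image input and perform the per-channel mean/std normalization inside the model before tracing, so no approximation is needed.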

- Update (2023/09/22): Thanks to jxiong22 for providing the scripts to convert the HuggingFace version of clip-vit-base-patch32. This has significantly reduced the precision error in the image encoder. For more details, see #18.
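To check whether a conversion actually reduces precision error, one approach is to feed the same preprocessed image to the original model and to the exported Core ML model, then compare the two embeddings. The helper below is a hypothetical sketch with made-up 4-dimensional values; in practice `reference` would be the PyTorch or HuggingFace output and `exported` the Core ML prediction for the same input.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def compare_embeddings(reference, exported):
    """Summarize how far an exported encoder's output drifts from a reference output."""
    if len(reference) != len(exported):
        raise ValueError("embeddings must have the same dimension")
    return {
        "cosine": cosine_similarity(reference, exported),
        "max_abs_diff": max(abs(r - e) for r, e in zip(reference, exported)),
    }


# Hypothetical outputs for the same image (real CLIP ViT-B/32 embeddings have 512 dimensions).
reference = [0.12, -0.40, 0.33, 0.05]
exported = [0.121, -0.398, 0.331, 0.049]
report = compare_embeddings(reference, exported)
print(f"cosine={report['cosine']:.6f}, max_abs_diff={report['max_abs_diff']:.4f}")
```

Because search results are ranked by similarity, a cosine close to 1.0 between the two outputs is usually a more telling metric than exact element-wise equality.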

Disclaimer: I am not a professional iOS engineer, so please forgive my poor Swift code. You may want to focus only on how the model is loaded and how the vectors are computed, stored, and sorted.

You can apply Queryable to your own product, but I don't recommend simply modifying the appearance and listing it on the App Store.

If you are interested in optimizing certain aspects (such as mazzzystar#4, mazzzystar#5, mazzzystar#6, mazzzystar#10, mazzzystar#11, mazzzystar#12), feel free to submit a pull request (PR).

- Thanks to Chris Buguet, the issue (mazzzystar#5) that prevented Queryable from running on devices older than the iPhone 11 has been fixed.

- greyovo has completed development of the Android app (mazzzystar#12), available on Google Play. The author stated that the code would be released in the future.

Thank you for your contributions :)

If you have any questions/suggestions, here are some contact methods: Discord | Twitter | Reddit: r/Queryable.

MIT License

Copyright (c) 2023 Ke Fang