DelayQueue is a Redis-based message queue that supports delayed and scheduled delivery. It is designed to be reliable, scalable, and easy to get started with.

Core Advantages:

- Guaranteed at-least-once consumption

- Automatic retry of failed messages

- Works out of the box: nothing to configure or deploy. A Redis instance is all you need.

- Natively adapted to distributed environments: messages are processed concurrently on multiple machines, and workers can be added, removed, or migrated at any time.

- Supports Redis Cluster and the clusters of most cloud service providers. See the Cluster chapter.

- Easy-to-use monitoring data exporter. See Monitoring.
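Because consumption is at-least-once, a callback may see the same message more than once, for example after a timeout followed by a redelivery. The sketch below shows one way to make a callback tolerate that. It uses only the standard library, the `Idempotent` helper is hypothetical and not part of DelayQueue, and it assumes that the payload itself uniquely identifies a unit of work.

```go
package main

import (
	"fmt"
	"sync"
)

// Idempotent wraps a callback so that a payload which was already processed
// successfully is confirmed again without re-running the handler.
// Hypothetical helper; assumes the payload uniquely identifies the work.
func Idempotent(handler func(payload string) bool) func(payload string) bool {
	var mu sync.Mutex
	done := make(map[string]struct{})
	return func(payload string) bool {
		mu.Lock()
		_, seen := done[payload]
		mu.Unlock()
		if seen {
			return true // duplicate delivery: confirm without side effects
		}
		if !handler(payload) {
			return false // failure: not recorded, so a retry runs the handler again
		}
		mu.Lock()
		done[payload] = struct{}{}
		mu.Unlock()
		return true
	}
}

func main() {
	runs := 0
	cb := Idempotent(func(payload string) bool {
		runs++
		return true
	})
	cb("order-42")
	cb("order-42") // simulated redelivery of the same message
	fmt.Println("handler runs:", runs)
}
```

The in-memory set only protects a single process and does not stop two simultaneous deliveries of the same payload from both running the handler. With several workers the "already done" marker would have to live in shared storage.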

DelayQueue requires a Go version with modules support. Run the following command in a project that has a go.mod:

go get github.com/hdt3213/delayqueue

If you are using github.com/go-redis/redis/v8, please use go get github.com/hdt3213/delayqueue@redisv8 instead.

package main

import (

"github.com/redis/go-redis/v9"

"github.com/hdt3213/delayqueue"

"strconv"

"time"

)

func main() {

redisCli := redis.NewClient(&redis.Options{

Addr: "127.0.0.1:6379",

})

queue := delayqueue.NewQueue("example", redisCli, func(payload string) bool {

// callback returns true to confirm successful consumption.

// If the callback returns false or does not return within maxConsumeDuration, DelayQueue will re-deliver this message

return true

}).WithConcurrent(4) // set the number of concurrent consumers

// send delay message

for i := 0; i < 10; i++ {

_, err := queue.SendDelayMsgV2(strconv.Itoa(i), time.Hour, delayqueue.WithRetryCount(3))

if err != nil {

panic(err)

}

}

// send schedule message

for i := 0; i < 10; i++ {

_, err := queue.SendScheduleMsgV2(strconv.Itoa(i), time.Now().Add(time.Hour))

if err != nil {

panic(err)

}

}

// start consume

done := queue.StartConsume()

<-done

}

SendScheduleMsgV2 (SendDelayMsgV2) is fully compatible with SendScheduleMsg (SendDelayMsg).

Please note that redis/v8 is not compatible with Redis Cluster 7.x.

If you are using a Redis client other than go-redis, you can wrap it in the RedisCli interface.

If you don't want to set the callback during initialization, you can use WithCallback instead.

By default, a delayqueue instance can act as both producer and consumer.

If your program only needs to produce messages and the consumers run elsewhere, delayqueue.NewPublisher is a good option.

func consumer() {

queue := NewQueue("test", redisCli, cb)

queue.StartConsume()

}

func producer() {

publisher := NewPublisher("test", redisCli)

publisher.SendDelayMsg(strconv.Itoa(i), 0)

}

msg, err := queue.SendScheduleMsgV2(strconv.Itoa(i), time.Now().Add(time.Second))

if err != nil {

panic(err)

}

result, err := queue.TryIntercept(msg)

if err != nil {

panic(err)

}

if result.Intercepted {

println("interception success!")

} else {

println("interception failed, message has been consumed!")

}

SendScheduleMsgV2 and SendDelayMsgV2 return a structure that contains message tracking information. Pass it to TryIntercept to try to intercept the consumption of the message.

If the message is pending or waiting to be consumed, the interception will succeed. If the message has already been consumed or is awaiting retry, the interception will fail, but TryIntercept will still prevent subsequent retries.

TryIntercept returns an InterceptResult, whose Intercepted field indicates whether the interception succeeded.

func (q *DelayQueue) WithCallback(callback CallbackFunc) *DelayQueue

WithCallback sets the callback that the queue uses to receive and consume messages. The callback returns true to confirm successful consumption, or false to have the message re-delivered.

If no callback is set, StartConsume will panic.

queue := NewQueue("test", redisCli)

queue.WithCallback(func(payload string) bool {

return true

})

func (q *DelayQueue) WithLogger(logger Logger) *DelayQueue

WithLogger customizes the logger for the queue. The logger should implement the following interface:

type Logger interface {

Printf(format string, v ...interface{})

}

func (q *DelayQueue) WithConcurrent(c uint) *DelayQueue

WithConcurrent sets the number of concurrent consumers.
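For WithLogger, the one-method Logger interface is already satisfied by `*log.Logger` from the standard library, so no adapter is needed in the simplest case. The sketch below redeclares the interface locally so that it compiles on its own. `PrefixLogger` and `demo` are hypothetical names that show how a small wrapper could tag every line with a queue name.

```go
package main

import (
	"bytes"
	"fmt"
	"log"
)

// Logger is a local copy of the interface that WithLogger expects.
type Logger interface {
	Printf(format string, v ...interface{})
}

// PrefixLogger is a hypothetical adapter that tags each line with a queue name.
type PrefixLogger struct {
	Queue string
	Out   Logger
}

// Printf forwards to the wrapped logger with the queue name prepended.
func (l PrefixLogger) Printf(format string, v ...interface{}) {
	args := append([]interface{}{l.Queue}, v...)
	l.Out.Printf("[queue=%s] "+format, args...)
}

// Compile-time checks: both types satisfy Logger.
var (
	_ Logger = (*log.Logger)(nil)
	_ Logger = PrefixLogger{}
)

// demo writes one line through the adapter and returns what was logged.
func demo() string {
	var buf bytes.Buffer
	std := log.New(&buf, "", 0) // no timestamp flags, so the output is stable
	logger := PrefixLogger{Queue: "example", Out: std}
	logger.Printf("retry %d", 3)
	return buf.String()
}

func main() {
	fmt.Print(demo())
}
```

A value such as `logger` here has the shape that WithLogger accepts, assuming the interface in the library matches the one shown above.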

func (q *DelayQueue) WithFetchInterval(d time.Duration) *DelayQueue

WithFetchInterval customizes the interval at which consumers fetch messages from Redis.

func (q *DelayQueue) WithMaxConsumeDuration(d time.Duration) *DelayQueue

WithMaxConsumeDuration customizes the max consume duration.

If no acknowledgement is received within the max consume duration after a message is delivered, DelayQueue will try to deliver the message again.

func (q *DelayQueue) WithFetchLimit(limit uint) *DelayQueue

WithFetchLimit limits the max number of unacked (processing) messages.

UseHashTagKey()

UseHashTagKey adds hash tags to Redis keys to ensure that all keys of this queue are allocated to the same hash slot.

If you are using Codis, AliyunRedisCluster, or TencentCloudRedisCluster, you should add this option to NewQueue: NewQueue("test", redisCli, cb, UseHashTagKey()). This option cannot be changed after the DelayQueue has been created.

WARNING! Changing (adding or removing) this option will make DelayQueue unable to read the data that already exists in Redis.

See more: https://redis.io/docs/reference/cluster-spec/#hash-tags
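The hash-tag rule that UseHashTagKey relies on can be illustrated with a short, standard-library-only sketch of the slot computation described in the Redis Cluster specification linked above (CRC16 of the key, or of the hash tag if one is present, modulo 16384). The key names in `main` are hypothetical and are not necessarily the keys DelayQueue writes.

```go
package main

import (
	"fmt"
	"strings"
)

// crc16 implements the CRC16 variant used by Redis Cluster
// (polynomial 0x1021, initial value 0).
func crc16(data []byte) uint16 {
	var crc uint16
	for _, b := range data {
		crc ^= uint16(b) << 8
		for i := 0; i < 8; i++ {
			if crc&0x8000 != 0 {
				crc = (crc << 1) ^ 0x1021
			} else {
				crc <<= 1
			}
		}
	}
	return crc
}

// hashSlot returns the cluster slot of a key. If the key contains a
// non-empty {tag}, only the tag is hashed.
func hashSlot(key string) uint16 {
	if start := strings.IndexByte(key, '{'); start >= 0 {
		if end := strings.IndexByte(key[start+1:], '}'); end > 0 {
			key = key[start+1 : start+1+end]
		}
	}
	return crc16([]byte(key)) % 16384
}

func main() {
	// Hypothetical key names, chosen only to show the effect of a shared tag.
	for _, key := range []string{"{example}:pending", "{example}:ready", "example:pending", "example:ready"} {
		fmt.Printf("%-20s slot %d\n", key, hashSlot(key))
	}
}
```

The two tagged keys always map to the same slot, while the untagged ones are hashed over their full names and have no such guarantee.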

WithDefaultRetryCount(count uint) *DelayQueue

WithDefaultRetryCount customizes the max number of retries. It applies to the messages in this queue.

Use WithRetryCount in DelayQueue.SendScheduleMsg or DelayQueue.SendDelayMsg to specify the retry count of a particular message:

queue.SendDelayMsg(msg, time.Hour, delayqueue.WithRetryCount(3))

WithNackRedeliveryDelay(d time.Duration) *DelayQueue

WithNackRedeliveryDelay customizes the interval between a nack (the callback returning false) and redelivery. If consumption exceeds the deadline, however, the message is redelivered immediately.

func (q *DelayQueue) WithScriptPreload(flag bool) *DelayQueue

WithScriptPreload(true) makes DelayQueue preload its scripts and call them using EvalSha to reduce communication costs. WithScriptPreload(false) makes DelayQueue run scripts with the Eval command. Preloading with EvalSha is the default.

queue := delayqueue.NewQueue("example", redisCli, callback, UseCustomPrefix("MyPrefix"))

All keys of delayqueue share the same prefix, dp by default. If you want to change the prefix, you can use UseCustomPrefix.

We provide Monitor to monitor the running status.

monitor := delayqueue.NewMonitor("example", redisCli)

Monitor.ListenEvent registers a listener that receives all internal events, so you can use it to implement customized data reporting and metrics.

The monitor can receive events from all workers, even if they are running on another server.

type EventListener interface {

OnEvent(*Event)

}

// returns: close function, error

func (m *Monitor) ListenEvent(listener EventListener) (func(), error)

The definition of Event can be found in events.go.

In addition, we provide a demo that uses EventListener to monitor the production and consumption amounts per minute.

The complete demo code can be found in example/monitor.

type MyProfiler struct {

List []*Metrics

Start int64

}

func (p *MyProfiler) OnEvent(event *delayqueue.Event) {

sinceUptime := event.Timestamp - p.Start

upMinutes := sinceUptime / 60

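// grow the list until the current minute has a slot, so minutes without events cannot cause an out-of-range index

for len(p.List) <= int(upMinutes) {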

p.List = append(p.List, &Metrics{})

}

current := p.List[upMinutes]

switch event.Code {

case delayqueue.NewMessageEvent:

current.ProduceCount += event.MsgCount

case delayqueue.DeliveredEvent:

current.DeliverCount += event.MsgCount

case delayqueue.AckEvent:

current.ConsumeCount += event.MsgCount

case delayqueue.RetryEvent:

current.RetryCount += event.MsgCount

case delayqueue.FinalFailedEvent:

current.FailCount += event.MsgCount

}

}

func main() {

queue := delayqueue.NewQueue("example", redisCli, func(payload string) bool {

return true

})

start := time.Now()

// IMPORTANT: EnableReport must be called so monitor can do its work

queue.EnableReport()

// setup monitor

monitor := delayqueue.NewMonitor("example", redisCli)

listener := &MyProfiler{

Start: start.Unix(),

}

monitor.ListenEvent(listener)

// print metrics every minute

tick := time.Tick(time.Minute)

go func() {

for range tick {

minutes := len(listener.List)-1

fmt.Printf("%d: %#v", minutes, listener.List[minutes])

}

}()

}

Monitor uses Redis pub/sub to collect data, so it is important to call DelayQueue.EnableReport on all workers to enable event reporting for the monitor.

If you do not want to use Redis pub/sub, you can use DelayQueue.ListenEvent to collect data yourself.

Please note that DelayQueue.ListenEvent can only receive events from the current instance, while the monitor can receive events from all instances of the queue.

Once DelayQueue.ListenEvent is called, the monitor's listener will be overwritten unless EnableReport is called again to re-enable the monitor.
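In the MyProfiler demo above, the listener's list is written in OnEvent and read by the printing goroutine. Assuming events can be delivered on a different goroutine than the reader (this document does not say which goroutine calls OnEvent), a listener may want to guard its state with a mutex. The sketch below is standard-library only: `Event` is a local stand-in that carries just two of the fields the demo reads, and `SafeCounter` is a hypothetical name.

```go
package main

import (
	"fmt"
	"sync"
)

// Event is a local stand-in for the library's event type, reduced to the
// two fields this sketch needs. The real definition lives in events.go.
type Event struct {
	Timestamp int64 // unix seconds
	MsgCount  int
}

// SafeCounter counts messages per minute since Start and is safe to use
// from several goroutines.
type SafeCounter struct {
	mu        sync.Mutex
	start     int64
	perMinute []int
}

func NewSafeCounter(start int64) *SafeCounter {
	return &SafeCounter{start: start}
}

// OnEvent adds the event's message count to the bucket of its minute.
func (c *SafeCounter) OnEvent(e *Event) {
	c.mu.Lock()
	defer c.mu.Unlock()
	minute := int((e.Timestamp - c.start) / 60)
	if minute < 0 {
		return // event predates the start time; ignore it
	}
	for len(c.perMinute) <= minute {
		c.perMinute = append(c.perMinute, 0) // fill quiet minutes with zeros
	}
	c.perMinute[minute] += e.MsgCount
}

// Snapshot returns a copy so callers never share the internal slice.
func (c *SafeCounter) Snapshot() []int {
	c.mu.Lock()
	defer c.mu.Unlock()
	return append([]int(nil), c.perMinute...)
}

func main() {
	c := NewSafeCounter(0)
	c.OnEvent(&Event{Timestamp: 5, MsgCount: 2})
	c.OnEvent(&Event{Timestamp: 125, MsgCount: 1})
	fmt.Println(c.Snapshot())
}
```

Returning a copy from Snapshot keeps the reporting goroutine from holding the lock while it formats or prints the data.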

You can get the pending count, ready count, and processing count from the monitor:

func (m *Monitor) GetPendingCount() (int64, error)

GetPendingCount returns the number of messages whose delivery time has not arrived yet.

func (m *Monitor) GetReadyCount() (int64, error)

GetReadyCount returns the number of messages whose delivery time has arrived but which have not been delivered yet.

func (m *Monitor) GetProcessingCount() (int64, error)

GetProcessingCount returns the number of messages that are being processed.

If you are using Redis Cluster, please use NewQueueOnCluster:

redisCli := redis.NewClusterClient(&redis.ClusterOptions{

Addrs: []string{

"127.0.0.1:7000",

"127.0.0.1:7001",

"127.0.0.1:7002",

},

})

callback := func(s string) bool {

return true

}

queue := NewQueueOnCluster("test", redisCli, callback)

If you are using a transparent cluster, such as Codis, twemproxy, or the cluster-architecture Redis offered by Aliyun or Tencent Cloud, just use NewQueue and enable hash tags:

redisCli := redis.NewClient(&redis.Options{

Addr: "127.0.0.1:6379",

})

callback := func(s string) bool {

return true

}

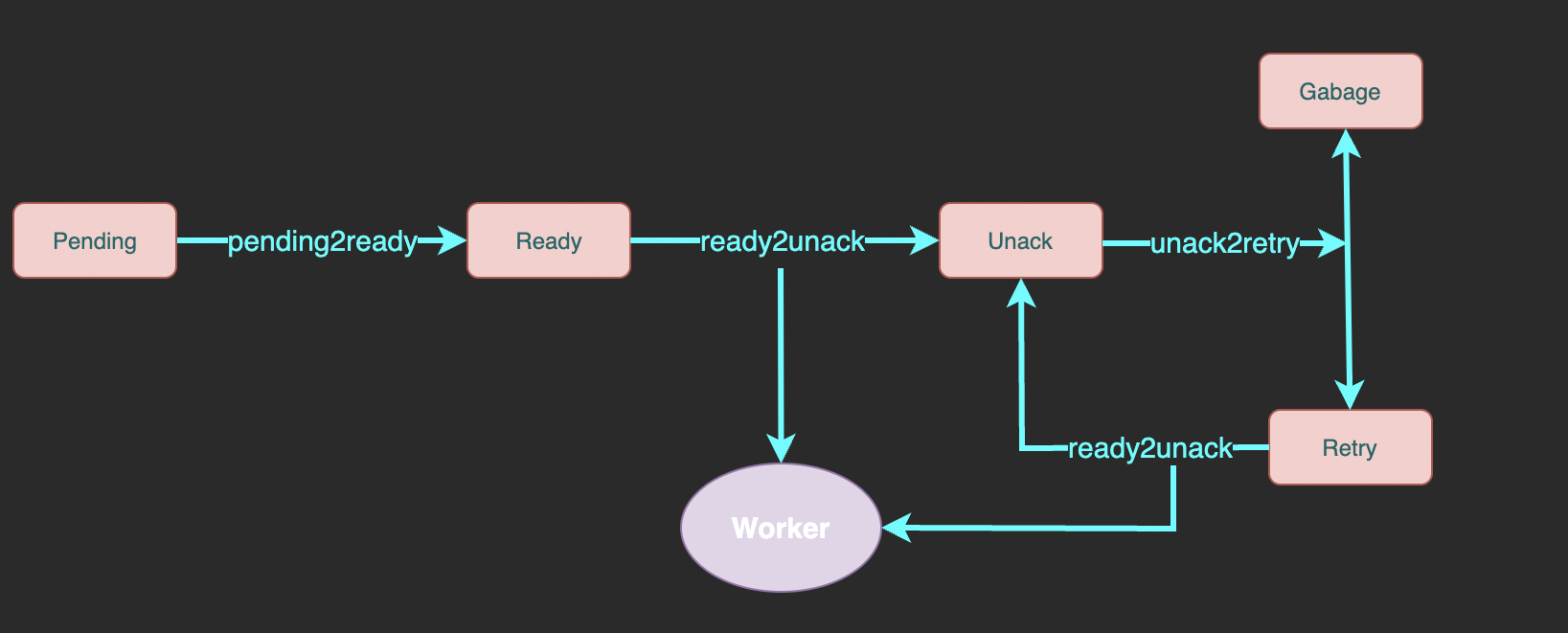

queue := delayqueue.NewQueue("example", redisCli, callback, UseHashTagKey())Here is the complete flowchart:

- pending: A sorted set of messages pending delivery. member is the message ID, score is the delivery unix timestamp.

- ready: A list of messages ready to be delivered. Workers fetch messages from here.

- unack: A sorted set of messages waiting for ack (confirmation of successful consumption), which means the messages here are being processed. member is the message ID, score is the unix timestamp of the processing deadline.

- retry: A list of messages whose processing exceeded the deadline and which are waiting for retry.

- garbage: A list of messages that have reached the max retry count and are waiting to be cleaned up.
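To make the movement between these five structures concrete, here is a toy in-memory model of the lifecycle. It is only a sketch under stated assumptions: it uses Go maps and slices instead of Redis, every name in it (`Model`, `Send`, `Tick`, `Fetch`, `Ack`) is hypothetical, and the exact moment at which the real implementation moves a message from retry back to ready is assumed rather than taken from the library.

```go
package main

import "fmt"

// Model is a hypothetical in-memory stand-in for the five Redis structures.
type Model struct {
	pending map[string]int64 // message id -> delivery unix timestamp
	ready   []string
	unack   map[string]int64 // message id -> processing deadline
	retry   []string
	garbage []string
	retries map[string]int // message id -> retries left (bookkeeping for this sketch)
}

func NewModel() *Model {
	return &Model{pending: map[string]int64{}, unack: map[string]int64{}, retries: map[string]int{}}
}

// Send puts a message into pending with its delivery time.
func (m *Model) Send(id string, deliverAt int64, retryCount int) {
	m.pending[id] = deliverAt
	m.retries[id] = retryCount
}

// Tick advances the model to time now.
func (m *Model) Tick(now int64) {
	// Assumed: messages waiting in retry become ready again on the next tick.
	m.ready = append(m.ready, m.retry...)
	m.retry = nil
	// pending -> ready once the delivery time has arrived.
	for id, at := range m.pending {
		if at <= now {
			delete(m.pending, id)
			m.ready = append(m.ready, id)
		}
	}
	// unack -> retry (or garbage) once the processing deadline has passed.
	for id, deadline := range m.unack {
		if deadline > now {
			continue
		}
		delete(m.unack, id)
		if m.retries[id] > 0 {
			m.retries[id]--
			m.retry = append(m.retry, id)
		} else {
			delete(m.retries, id)
			m.garbage = append(m.garbage, id)
		}
	}
}

// Fetch hands the next ready message to a worker and records its deadline.
func (m *Model) Fetch(now, maxConsume int64) (string, bool) {
	if len(m.ready) == 0 {
		return "", false
	}
	id := m.ready[0]
	m.ready = m.ready[1:]
	m.unack[id] = now + maxConsume
	return id, true
}

// Ack confirms successful consumption and drops the message.
func (m *Model) Ack(id string) {
	delete(m.unack, id)
	delete(m.retries, id)
}

func main() {
	m := NewModel()
	m.Send("m1", 10, 1)      // deliver at t=10, allow one retry
	m.Tick(10)               // m1 moves from pending to ready
	id, _ := m.Fetch(10, 30) // a worker takes m1, deadline t=40
	m.Tick(40)               // no ack arrived: m1 moves from unack to retry
	fmt.Println(id, "retry:", m.retry, "garbage:", m.garbage)
}
```

A real sorted set orders members by score, whereas the maps here have no defined iteration order, so the sketch only models which structure a message is in, not the order within it.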