Extending SSL patches spatial relations in Vision Transformers for object detection and instance segmentation tasks

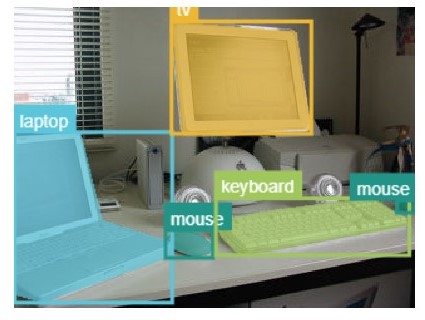

The Vision Transformer (ViT) architecture has become a de facto standard in computer vision, achieving state-of-the-art performance in various tasks. This popularity stems from its remarkable computational efficiency and its global self-attention mechanism. However, in contrast with convolutional neural networks (CNNs), ViTs require large amounts of data to generalize well. In particular, on small datasets, their lack of inductive biases (e.g., translation equivariance and locality) can lead to poor results. To overcome this issue, self-supervised learning (SSL) techniques that learn spatial relations among image patches (e.g., positions, angles, and Euclidean distances) without human annotations are extremely useful and easy to integrate into the ViT architecture. The corresponding model, dubbed RelViT, was shown to improve overall image classification accuracy by optimizing token encoding and providing new visual representations of the data. This work demonstrates the effectiveness of SSL strategies for object detection and instance segmentation tasks as well. RelViT outperforms the standard ViT architecture on multiple datasets in most of the relevant benchmark metrics. In particular, when training on a small subset of COCO, RelViT achieved mAP gains of +2.70% for instance segmentation and +2.20% for object detection. Moreover, experiments applying RelViT to other ViT-based architectures (Swin-ViT and T2T-ViT) achieved higher results in all mAP metrics on the KITTI dataset.
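To make the idea of annotation-free spatial relations between patches more concrete, here is a minimal sketch that computes, for every pair of patches on a square grid, the Euclidean distance and the angle between their grid positions. Quantities like these could serve as targets for a relational pretext task. This is an illustration under our own assumptions, not RelViT's implementation: coordinates are measured in grid units, angles come from `math.atan2` in image coordinates (rows grow downward), and the helper names `patch_position`, `pairwise_relation`, and `relation_targets` are hypothetical. The exact target definitions, normalization, and losses used by RelViT are not specified here.

```python
import math
from typing import List, Tuple


def patch_position(index: int, grid_size: int) -> Tuple[int, int]:
    """Hypothetical helper: map a flattened patch index to (row, col) on a
    grid_size x grid_size patch grid (row-major order, as in ViT patchify)."""
    return divmod(index, grid_size)


def pairwise_relation(i: int, j: int, grid_size: int) -> Tuple[float, float]:
    """Hypothetical helper: return (euclidean_distance, angle_radians) from
    patch i to patch j, both measured in grid units.

    The angle is atan2(dy, dx) in image coordinates, where rows (y) grow
    downward; it lies in (-pi, pi].
    """
    ri, ci = patch_position(i, grid_size)
    rj, cj = patch_position(j, grid_size)
    dy, dx = rj - ri, cj - ci
    distance = math.hypot(dx, dy)
    angle = math.atan2(dy, dx)
    return distance, angle


def relation_targets(grid_size: int) -> List[List[Tuple[float, float]]]:
    """Hypothetical helper: build the full n x n table of (distance, angle)
    targets for all ordered patch pairs, with n = grid_size ** 2."""
    n = grid_size * grid_size
    return [[pairwise_relation(i, j, grid_size) for j in range(n)] for i in range(n)]


if __name__ == "__main__":
    # A 224x224 image split into 16x16 patches yields a 14x14 grid.
    d, a = pairwise_relation(0, 15, grid_size=14)  # patch (0, 0) -> patch (1, 1)
    print(f"distance={d:.4f}, angle={math.degrees(a):.1f} deg")
```

Running the script prints `distance=1.4142, angle=45.0 deg`, the diagonal neighbour one row down and one column right. The number of ordered pairs grows as n², so for larger grids it may be preferable to sample a subset of pairs instead of materializing the full table.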