Website | Position Paper | Benchmark Paper | Mailing List

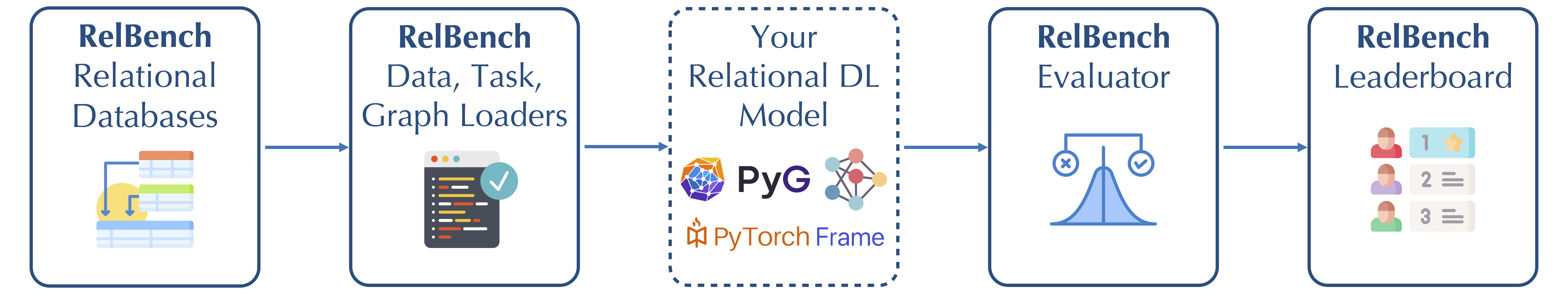

Relational Deep Learning is a new approach for end-to-end representation learning on data spread across multiple tables, such as in a relational database (see our position paper). Relational databases are the world's most widely used data management system, and are used for industrial and scientific purposes across many domains. RelBench is a benchmark designed to facilitate efficient, robust and reproducible research on end-to-end deep learning over relational databases.

RelBench contains 7 realistic, large-scale, and diverse relational databases spanning domains including medical, social networks, e-commerce and sport. Each database has multiple predictive tasks (30 in total) defined, each carefully scoped to be both challenging and of domain-specific importance. It provides full support for data downloading, task specification and standardized evaluation in an ML-framework-agnostic manner.

Additionally, RelBench provides a first open-source implementation of a Graph Neural Network based approach to relational deep learning. This implementation uses PyTorch Geometric to load the data as a graph and train GNN models, and PyTorch Frame for modeling tabular data. Finally, there is an open leaderboard for tracking progress.

RelBench: A Benchmark for Deep Learning on Relational Databases

This paper details our approach to designing the RelBench benchmark. It also includes a key user study showing that relational deep learning can produce performant models with a fraction of the manual human effort required by typical data science pipelines. This paper is useful for a detailed understanding of RelBench and our initial benchmarking results. If you just want to quickly familiarize yourself with the data and tasks, the website is a better place to start.

Position: Relational Deep Learning - Graph Representation Learning on Relational Databases

This paper outlines our proposal for how to do end-to-end deep learning on relational databases by combining graph neural networks with deep tabular models. We recommend reading this paper if you want to think about new methods for end-to-end deep learning on relational databases. The paper includes a section on possible directions for future research, giving a snapshot of the research possibilities in this area.

RelBench has the following main components:

- 7 databases with a total of 30 tasks, all automatically downloadable for ease of use

- Easy data loading and graph construction from primary key-foreign key (pkey-fkey) links

- Your own model, which can use any deep learning stack since RelBench is framework-agnostic. We provide a first model implementation using PyTorch Geometric and PyTorch Frame.

- Standardized evaluators - all you need to do is produce a list of predictions for test samples, and RelBench computes metrics to ensure standardized evaluation

- A leaderboard you can upload your results to, to track SOTA progress.
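To make the idea of graph construction from pkey-fkey links concrete, here is a minimal sketch in plain Python. It is not RelBench's implementation: the toy tables, the column names and the helper `build_edges` are all hypothetical, chosen only to show how each foreign-key reference can become one edge between a child row and its parent row.

```python
# Hypothetical toy tables; names and columns are illustrative, not RelBench data.
users = [{"user_id": 10}, {"user_id": 11}]
reviews = [
    {"review_id": 0, "user_id": 10},
    {"review_id": 1, "user_id": 11},
    {"review_id": 2, "user_id": 10},
    {"review_id": 3, "user_id": None},  # missing foreign key, so no edge
]


def build_edges(child_rows, fkey_col, parent_rows, pkey_col):
    """Return (child_index, parent_index) pairs, one per valid foreign-key reference."""
    # Map each primary-key value to the row index of its parent row.
    parent_index = {row[pkey_col]: i for i, row in enumerate(parent_rows)}
    edges = []
    for i, row in enumerate(child_rows):
        key = row.get(fkey_col)
        if key in parent_index:  # skip missing or dangling references
            edges.append((i, parent_index[key]))
    return edges


edges = build_edges(reviews, "user_id", users, "user_id")
print(edges)
```

Each row acts as a node and each pair in `edges` links a review to the user who wrote it; a real pipeline would build one such edge list per foreign-key column.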

You can install RelBench using pip:

pip install relbench

This will allow usage of the core RelBench data and task loading functionality.

To additionally use relbench.modeling, which requires PyTorch, PyTorch Geometric and PyTorch Frame, install these dependencies manually or do:

pip install relbench[full]

For the scripts in the examples directory, use:

pip install relbench[example]

Then, to run a script:

git clone https://github.com/snap-stanford/relbench

cd relbench/examples

python gnn_node.py --dataset rel-f1 --task driver-position

This section provides a brief overview of using the RelBench package. For more in-depth coverage, see the Tutorials section. For detailed documentation, please see the code directly.

Imports:

from relbench.base import Table, Database, Dataset, EntityTask

from relbench.datasets import get_dataset

from relbench.tasks import get_task

Get a dataset, e.g., rel-amazon:

dataset: Dataset = get_dataset("rel-amazon", download=True)

Details on downloading and caching behavior.

RelBench datasets (and tasks) are cached to disk (usually at ~/.cache/relbench). If not present in cache, download=True downloads the data, verifies it against the known hash, and caches it. If present, download=True performs the verification and avoids downloading if verification succeeds. This is the recommended way.

download=False uses the cached data without verification, if present, or processes and caches the data from scratch / raw sources otherwise.

For faster download, please see this.
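The verify-before-download decision described above can be pictured with a small sketch. This is an illustration only, not RelBench's internals: the SHA-256 algorithm, the helper names `file_sha256` and `is_cache_valid`, and the file names are all assumptions made for the example.

```python
import hashlib
import os
import tempfile


def file_sha256(path, chunk_size=1 << 20):
    """Hash a file in chunks so large archives need not fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def is_cache_valid(path, known_hash):
    """True only if the cached file exists and matches the known hash."""
    return os.path.exists(path) and file_sha256(path) == known_hash


with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "db.zip")  # hypothetical cached archive
    with open(path, "wb") as f:
        f.write(b"example payload")
    known_hash = hashlib.sha256(b"example payload").hexdigest()

    valid = is_cache_valid(path, known_hash)  # present and intact
    stale = is_cache_valid(path, "0" * 64)    # present but hash mismatch
    missing = is_cache_valid(os.path.join(tmp, "absent.zip"), known_hash)

print(valid, stale, missing)
```

In this picture, a download would be triggered only in the `stale` and `missing` cases, while a valid cache entry is reused as-is.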

dataset consists of a Database object and temporal splitting times dataset.val_timestamp and dataset.test_timestamp.
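One way to read the two splitting times is as cutoffs on which rows a model may see at validation and at test time. The sketch below illustrates that reading with plain Python; the rows, the cutoff values and the helper `rows_up_to` are hypothetical, and the real cutoffs come from dataset.val_timestamp and dataset.test_timestamp.

```python
from datetime import datetime

# Hypothetical rows and cutoffs, for illustration only.
rows = [
    {"review_id": 0, "time": datetime(2014, 6, 1)},
    {"review_id": 1, "time": datetime(2015, 3, 1)},
    {"review_id": 2, "time": datetime(2015, 11, 1)},
    {"review_id": 3, "time": datetime(2016, 5, 1)},
]
val_timestamp = datetime(2015, 1, 1)
test_timestamp = datetime(2015, 10, 1)


def rows_up_to(rows, cutoff):
    """Keep only rows whose timestamp is not later than the cutoff."""
    return [r for r in rows if r["time"] <= cutoff]


visible_at_val = rows_up_to(rows, val_timestamp)
visible_at_test = rows_up_to(rows, test_timestamp)

print([r["review_id"] for r in visible_at_val])
print([r["review_id"] for r in visible_at_test])
```

Rows later than a cutoff are exactly the ones that would leak future information into a prediction made at that time.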

To get the database:

db: Database = dataset.get_db()

Preventing temporal leakage

By default, rows with timestamp > dataset.test_timestamp are excluded to prevent accidental temporal leakage. The full database can be obtained with:

full_db: Database = dataset.get_db(upto_test_timestamp=False)

Various tasks can be defined on a dataset. For example, to get the user-churn task for rel-amazon:

task: EntityTask = get_task("rel-amazon", "user-churn", download=True)

A task provides train/val/test tables:

train_table: Table = task.get_table("train")

val_table: Table = task.get_table("val")

test_table: Table = task.get_table("test")

Preventing test leakage

By default, the target labels are hidden from the test table to prevent accidental data leakage. The full test table can be obtained with:

full_test_table: Table = task.get_table("test", mask_input_cols=False)

You can build your model on top of the database and the task tables. After training and validation, you can make predictions from your model on the test table. If your prediction test_pred is a NumPy array following the order of task.test_table, you can call the following to get the evaluation metrics:

task.evaluate(test_pred)

Additionally, you can evaluate validation (or training) predictions as such:

task.evaluate(val_pred, val_table)

| Notebook | Description |
|---|---|
| load_data.ipynb | Load and explore RelBench data |
| train_model.ipynb | Train your first GNN-based model on RelBench |
| custom_dataset.ipynb | Use your own data in RelBench |
| custom_task.ipynb | Define your own ML tasks in RelBench |

Please check out CONTRIBUTING.md if you are interested in contributing datasets, tasks, bug fixes, etc. to RelBench.

If you use RelBench in your work, please cite our position and benchmark papers:

@inproceedings{rdl,
  title={Position: Relational Deep Learning - Graph Representation Learning on Relational Databases},
  author={Fey, Matthias and Hu, Weihua and Huang, Kexin and Lenssen, Jan Eric and Ranjan, Rishabh and Robinson, Joshua and Ying, Rex and You, Jiaxuan and Leskovec, Jure},
  booktitle={Forty-first International Conference on Machine Learning}
}

@misc{relbench,
  title={RelBench: A Benchmark for Deep Learning on Relational Databases},
  author={Joshua Robinson and Rishabh Ranjan and Weihua Hu and Kexin Huang and Jiaqi Han and Alejandro Dobles and Matthias Fey and Jan E. Lenssen and Yiwen Yuan and Zecheng Zhang and Xinwei He and Jure Leskovec},
  year={2024},
  eprint={2407.20060},
  archivePrefix={arXiv},
  primaryClass={cs.LG},
  url={https://arxiv.org/abs/2407.20060},
}